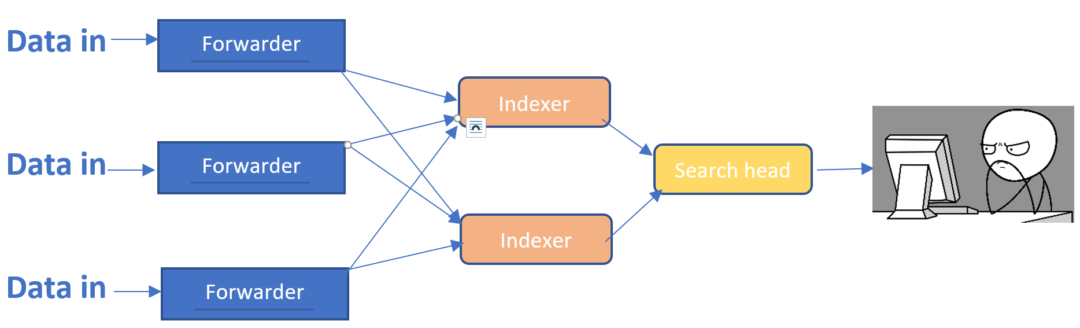

- Hands-on experience with API integration across applications, networks, and cloud environments.
- Solid understanding of data flow, data formatting/normalization, logging best practices, and data forwarding between various security controls.
- Experience with developing and improving data pipelines.
- Understanding of networking protocols and network-level troubleshooting.
- Experience with SIEM technologies: implementation, tuning, troubleshooting.
- Familiarity with both Windows and Linux OS (RHEL, CentOS, Ubuntu).
- Hands-on experience supporting/developing enterprise technology and network infrastructure.
- Proficiency with Splunk component utilization (e.g., indexer loads and requirements, search head clustering, etc.), component resourcing (e.g., underlying server specs), inter-component communications and tradeoffs (e.g., DNS vs IP tables, usage of SSL, etc.), and underlying platform requirements.
- Proficiency with data ingest, data normalization (using community TAs, custom TAs, or other solutions), and search/query design and execution.
- Robust experience in building, deploying, scaling, and troubleshooting the various facets of large-scale Splunk clusters and supporting apps.

In a Splunk environment, you can install and configure Cribl Stream as a Splunk app (Cribl App for Splunk). Depending on your requirements and architecture, it can run either on a Search Head (SH) or on a Heavy Forwarder (HF). You cannot use Cribl App for Splunk in a Cribl Stream distributed deployment as a Leader or as a managed Worker.

Cribl will continue to support this package, but customers are advised to begin planning now for the eventual removal of support. See Single-Instance Deployment and Distributed Deployment for alternatives.

Regardless of where you run Cribl App for Splunk, if you want to send data from Cribl Stream to a set of Splunk indexers: In the Cribl Stream UI, select Data > Destinations > Splunk Load Balanced, then enter the required information.

When running on an SH, Cribl Stream is set to mode-searchhead, the default mode for the app. It listens for localhost traffic generated by a custom command: | criblstream. The command forwards search results to the Cribl Stream instance's TCP JSON input on port 10420, but it can also send to any other Cribl Stream instance listening for TCP JSON. Once received, data can be processed and forwarded to any of the supported Destinations. In addition, several out-of-the-box saved searches are ready to run and send their results to Cribl with a single click.

Search head mode supports:

- Working with search results in a Cribl Stream pipeline.
- Sending search results to any Destination supported by Cribl Stream.

Installing the Cribl App for Splunk on an SH

1. Select an instance on which to install.
2. Ensure that ports 10000, 10420, and 9000 are available. See the Requirements section for more info.
3. Download the app package, and install it as a regular Splunk app.
4. Open the app and log in with Splunk admin role credentials.

When running on an HF, Cribl Stream is set to mode-hwf. It receives events from the local Splunk process per the routing configurations in the Splunk .conf files. Data is parsed and processed first by Splunk pipelines, and then by Cribl Stream. By default, all data except internal indexes is routed out right after the Typing pipeline.
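To make the search-head flow more concrete, here is a minimal Python sketch of two things the text describes: checking that the three required ports are free before installing, and sending events to a TCP JSON listener on port 10420. The function names are hypothetical. The framing (an optional header line carrying an `authToken`, followed by newline-delimited JSON events) is an assumption about how the TCP JSON input expects data, so verify it against the Cribl documentation before relying on it.

```python
import json
import socket
from typing import Iterable, Mapping, Optional

# Ports named in the install steps; nothing else about them is assumed here.
CRIBL_PORTS = (10000, 10420, 9000)


def port_is_free(port: int, host: str = "127.0.0.1") -> bool:
    """Return True if host:port can be bound, i.e. no other process holds it."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
        try:
            sock.bind((host, port))
        except OSError:
            return False
    return True


def build_tcp_json_payload(
    events: Iterable[Mapping[str, object]],
    auth_token: Optional[str] = None,
) -> bytes:
    """Serialize events as newline-delimited JSON.

    Assumption: when a token is supplied, it goes in a leading header line
    of the form {"authToken": "..."}.
    """
    lines = []
    if auth_token is not None:
        lines.append(json.dumps({"authToken": auth_token}))
    lines.extend(json.dumps(dict(event)) for event in events)
    if not lines:
        return b""
    return ("\n".join(lines) + "\n").encode("utf-8")


def send_events(
    events: Iterable[Mapping[str, object]],
    host: str = "127.0.0.1",
    port: int = 10420,
    auth_token: Optional[str] = None,
    timeout: float = 5.0,
) -> int:
    """Send events to a TCP JSON listener and return the number of bytes sent."""
    payload = build_tcp_json_payload(events, auth_token)
    with socket.create_connection((host, port), timeout=timeout) as conn:
        conn.sendall(payload)
    return len(payload)


if __name__ == "__main__":
    for p in CRIBL_PORTS:
        print(p, "free" if port_is_free(p) else "in use")
```

Before installation, all three ports should report as free. After the app is running, a call such as `send_events([{"_raw": "test event"}])` would exercise the local listener, assuming it accepts unauthenticated localhost traffic.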
